The House of Cards Problem: What Cable TV Taught Me About Streaming Data Quality

A 15-year cable analytics veteran on why streaming data quality failures stay invisible until they aren’t — and what it costs when the model breaks.

Key Takeaways

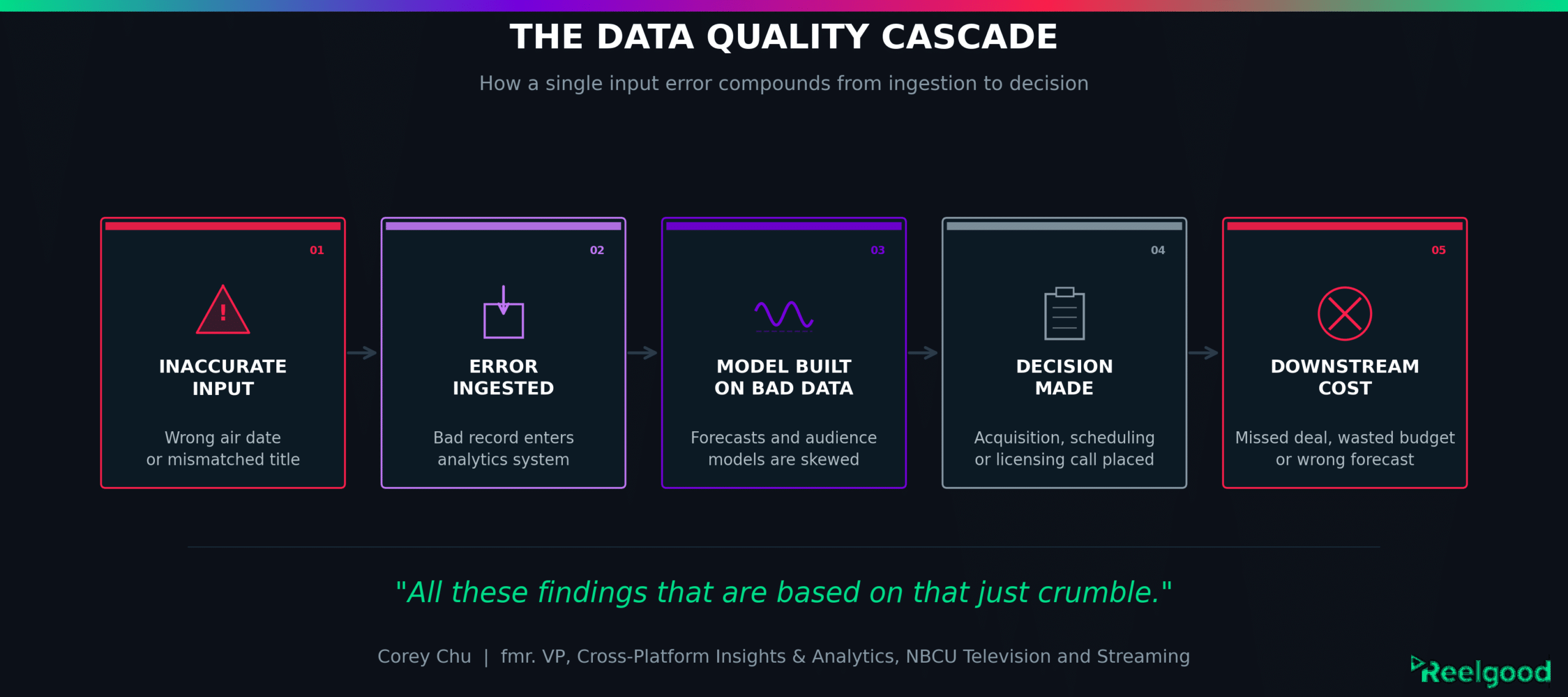

- Data quality failures in streaming entertainment analytics rarely announce themselves at the point of entry. The errors surface downstream, when forecasts don’t hold up and decisions have already been made.

- Content entity resolution — matching a TV or movie title consistently across different systems, vendors, and manual input formats — remains a persistent, underestimated gap at large media organizations.

- Senior leaders are often shielded from data quality problems by the teams producing their reports. The charts look clean. The underlying data may not be.

A show’s first air date is logged as October 2nd. It actually aired October 1st. The difference is one day. Performance reports get sent out. The findings are inserted into a time-sensitive presentation for executive leadership. Forecasts are projected. Programming decisions get made. Press releases are written.

Then someone notices the discrepancy. A call goes to the vendor. The data gets reprocessed.

And everything built on it falls apart. “All these findings that are based on that,” says Corey Chu, formerly Vice President, Cross-Platform Insights & Analytics at NBCU Television and Streaming, “just crumble.”

The reprocessing will take weeks at best. You know the corrected numbers will be bigger because you’re missing the first day of data (which is typically the most watched), but you don’t know how much bigger, and you don’t know if that difference would be material enough to change any of your findings and the decisions that have been made in response to them.

And if you’re trying to have an apples-to-apples comparison with other shows across a period of time like the first 7 days, you need to absolutely know you’re consistently looking at the first 7 days for each respective show so that you’re fairly comparing everything; the initial comparison ended up underrepresenting that show’s audience.

But you’re at least grateful you found the discrepancy. And it’s easier to find them when you’re looking at only a handful of shows, but if it’s 100 or 100,000 shows then you may not be able to find them.

It’s like looking for a needle in a haystack when you don’t know how many needles to look for, let alone if there are any needles at all to find. You also don’t know the “size” of the needles – the discrepancies could come down to the number of days, number of episodes, episode title, or what platform(s) it’s been on.

Chu spent 15 years at NBCU, most recently leading analytics and forecasting for six cable entertainment brands across linear and Peacock, with a focus on performance insights, content valuation, acquisitions recommendations, and audience targeting.

He has worked on more projects built on imperfect source data than he cares to count. His observation, offered matter-of-factly, captures something the streaming industry has been slow to confront at the strategic level.

The people managing the data — the analysts, data scientists, and researchers — know this problem intimately. The executives making decisions based on the outputs often don’t. That gap between the boardroom and the back room is where a lot of money quietly disappears.

The Entity Resolution Problem Nobody Talks About at the Top

The cleanest example of data fragility in large analytics operations is also the most mundane.

“You’re going to get 20 different ways to describe the second X-Men movie,” Chu says. “Data scientists came in and started linking everything together using IMDb as a source of truth. But there’s always that remaining 3% that they’re not able to automatically link.”

Three percent sounds small.

But in a catalog of tens of thousands of titles, it’s hundreds of records.

And those records are almost always the edge cases that matter most: the obscure library title that just became relevant for acquisition, the foreign co-production with inconsistent transliteration across sources, the reboot whose title nearly matches its predecessor.

For example, horror franchises love repeating the same exact title: Halloween (1978, 2007, 2018), Friday the 13th (1980, 2009), Scream (1996, 2022), and Scary Movie (2000, 2026).

This is the content entity resolution problem: normalizing, deduplicating, and correctly matching titles across disparate systems, vendors, and manual inputs at scale.

It has been a known challenge in media data for years.

What has changed is the surface area.

As streaming platforms have expanded catalogs into the hundreds of thousands, and pipelines have multiplied across SVOD, AVOD, TVOD, and TVE business models, the number of places a mismatch can occur has grown faster than the tools designed to handle it.

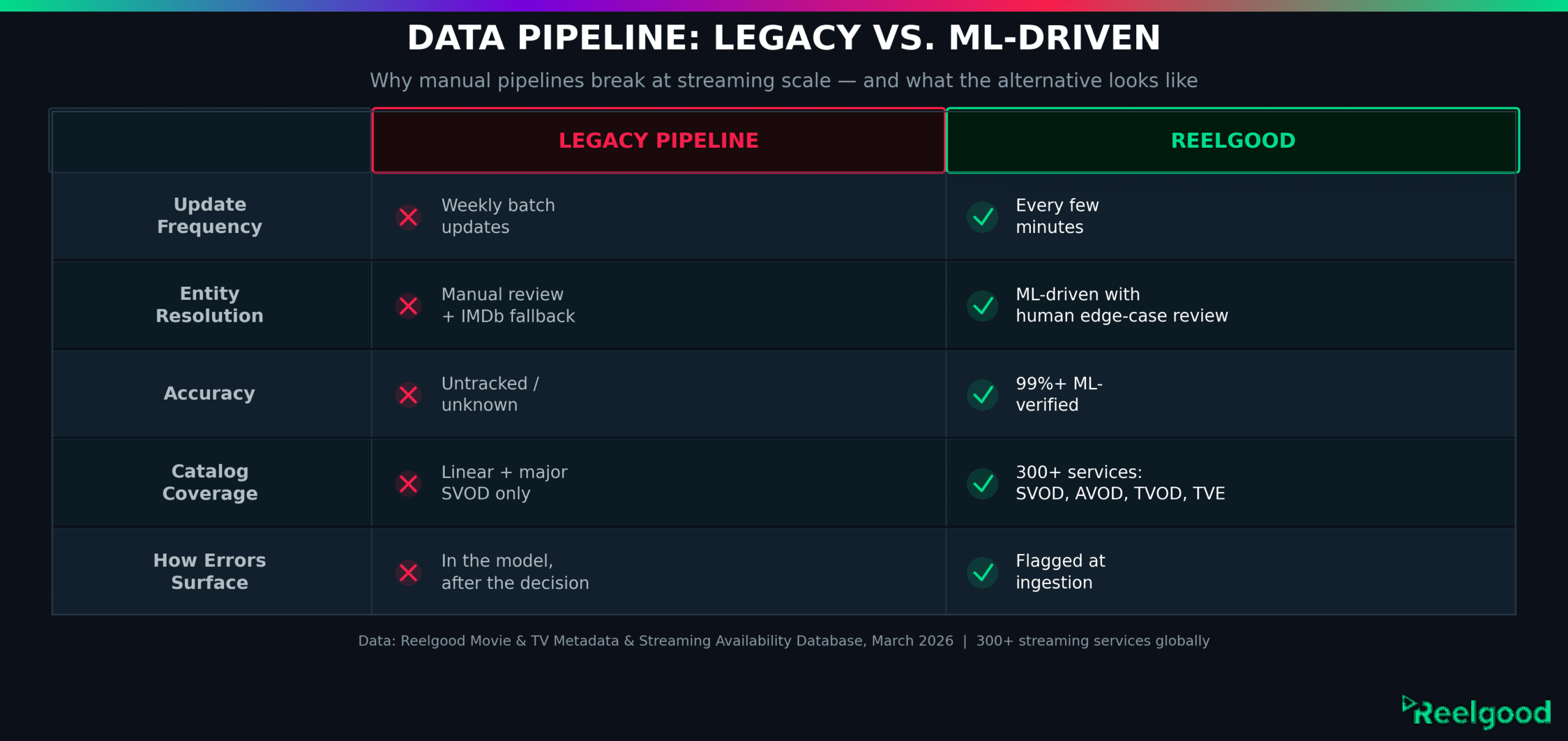

Legacy approaches rely heavily on manual review and reference databases like IMDb. Both have limits.

IMDb’s coverage is strong for widely-released titles and thinner for regional content, older library acquisitions, and titles where official naming conventions aren’t standardized across territories. Manual review doesn’t scale.

This is the problem Reelgood’s ML-driven content matching system was built to solve.

Rather than relying on exact-string matching or a single reference database, machine learning identifies and de-duplicates titles across sources regardless of naming variation. The result is 99%+ accuracy across over 4 million movie and TV titles, updated continuously across 300+ streaming services, not in overnight batches.

The Downstream Cascade

Entity resolution is a front-end problem. The date-shift scenario is a different kind of failure, and in some ways harder to catch.

When viewership data is processed with the wrong air date, the error doesn’t announce itself. The data looks complete. The models accept it. Reports go up the chain.

It’s only when someone spots an anomaly — or when the vendor reprocesses — that the cascade becomes visible and the findings built on that date collapse.

This matters most in predictive modeling.

Analytics teams routinely build forecast models for new content: how will a film perform in its first window? How does a procedural perform in Q1 versus Q2?

Chu notes that seasonality makes a measurable difference – colder weather in Q1 correlates with higher linear viewership, a variable that has to be explicitly built into models and calibrated against accurate historical data. Meanwhile for Q,2 people will more likely be out of their homes (and not watching TV) as the weather gets warmer and there’s more sunlight in the day.

When the records feeding those models are off by even a date or a title match, the calibration drifts. The error surfaces after the decision has already been made.

“Ten years ago, a source of truth was a lot easier to find,” Chu says. “Nowadays it’s becoming increasingly difficult.”

Catalog fragmentation across dozens of streaming services, business models, and territories has only widened that gap.

The velocity of the streaming catalog makes this harder. As our analysis of the streaming measurement gap found, most services lack real-time visibility into their own catalog state, let alone their competitors’.

A title available on a platform last Thursday may not be there today. Analytics built on weekly batch updates carries that lag forward into every model that depends on it.

The Boardroom Blind Spot

Here is the part of the problem that makes it structurally hard to fix: the people closest to the data quality issues are rarely the ones with budget authority to address them.

“A senior leader who doesn’t work in research or data science — they’re going to assume that the data they get from these teams is always good,” Chu says. “They’re the audience to a presentation like an audience would be to a movie. They’ll appreciate the work, but they won’t be privy to all the details of the editing, rehearsals, and backstage discussions needed to get to the final product.”

Research teams, data scientists, and analytics managers know exactly what’s holding the model together. They’re the ones placing calls to vendors, running manual disambiguation scripts, and reconciling the 3% that doesn’t auto-link. They understand precisely what the charts would look like if the underlying data were clean.

But they’re also the people whose job is to produce the charts. So the charts look good. The gaps stay in the back room.

This is a structural problem, not a personnel one.

The analytics function in a large media company is designed to produce reliable outputs. When it succeeds at that by compensating for bad inputs, it effectively shields leadership from the cost of the underlying problem. The business case for better metadata infrastructure often has to be made in the language of cost savings because the errors themselves aren’t visible to the people who control the budget. “I think the thing senior leaders probably would understand the most,” Chu says, “would be the cost savings.”

What a Reliable Foundation Actually Requires

The answer isn’t simply “better data.” It’s a different architecture.

Legacy data vendors in this space were built for a linear television era.

Manual data entry was viable when catalog sizes were measured in thousands of titles and updates were weekly. Streaming changed that.

A platform with tens of thousands of titles across SVOD, AVOD, and TVOD windows — with availability changing daily — cannot be managed with batch processing and exception tickets.

ML-driven pipelines handle this at scale.

Rather than a team of editors manually verifying each title, machine learning handles entity resolution, de-duplication, and title availability tracking, and flags exceptions for human review. The human team handles the edge cases the model can’t confidently resolve, not the entire catalog.

Reelgood built this infrastructure to solve its own problem first: a consumer streaming guide cannot survive on inaccurate data. When a user clicks play and the title isn’t where the guide says it is, they leave.

That requirement set a higher accuracy bar than most B2B data vendors had been held to. When companies including Google and Apple evaluated Reelgood’s data against alternatives, Reelgood won every evaluation.

That accuracy standard is what now powers Reelgood’s B2B data products: title availability and metadata across 300+ services, updated every few minutes, covering every streaming window type. Teams at companies including Apple, Google, Warner Bros. Discovery, and Tubi use the same infrastructure to support content and catalog decisions — the kind of decisions that benefit most from a foundation that doesn’t collapse when you put weight on it.

For analytics and data science teams who have built their models around whatever source of truth was available, the question is worth sitting with: how confident are you that what’s feeding the model is actually accurate?

If you work in streaming analytics, data science, or content acquisition:

If any of what Corey describes sounds familiar, we’d be glad to show you what Reelgood’s data looks like against your current sources. We can provide sample data for your catalog or a specific set of titles, including normalized entity IDs (IMDb, TMDB), full metadata fields, and historical availability records.

For teams evaluating data for content acquisition, rights management, or competitive catalog intelligence, see all the streaming use cases where teams currently apply this data in practice. If you’re considering alternatives to your current metadata vendor, this page is a useful starting point.

Reach us at sales@reelgood.com or through the contact form at data.reelgood.com.

The perspectives in this piece were drawn from a conversation with Corey Chu, formerly Vice President, Cross-Platform Insights & Analytics, NBCU Television and Streaming, where he spent 15 years leading programming analytics and forecasting across cable entertainment brands.

Data: Reelgood Movie & TV Metadata & Streaming Availability Database, March 2026. Coverage includes 300+ streaming services globally, updated every few minutes.